The Recruitment Agency's Guide to Standardising Candidate Assessments Across Clients

Introduction

In the competitive and fast-evolving world of recruitment, consistency is no longer a nice-to-have; it’s a strategic imperative. Yet many agencies still approach candidate assessment as a bespoke, client-by-client exercise: tweaking questions, adjusting criteria, and reinventing the wheel for every requisition. This ad-hoc approach might feel flexible, but it comes at a steep cost: inconsistent evaluations, biased outcomes, wasted recruiter time, and eroded trust with both clients and candidates.

The truth is, while every role has unique nuances, the foundation of effective assessment doesn’t need to be reinvented each time. By standardising core assessment methodologies, without sacrificing the ability to tailor to specific needs, agencies can dramatically improve the reliability, fairness, and efficiency of their screening process. Standardisation doesn’t mean rigidity; it means building a robust, evidence-based framework that enhances recruiter expertise, reduces costly errors, and turns assessment from a gamble into a repeatable, scalable advantage.

This guide isn’t about turning recruiters into robots or forcing a one-size-fits-all mentality. It’s about creating a shared language and set of tools that ensure every candidate is evaluated against clear, defensible criteria, while still allowing room for the nuanced judgment that only experienced recruiters can provide. Whether you’re a boutique firm serving niche industries or a mid-sized agency handling high-volume requisitions, standardising your assessments will help you deliver higher-quality shortlists, build stronger client relationships, and protect your reputation in an increasingly discerning market.

In this guide, we’ll walk you through exactly how to standardise candidate assessments across clients, step by step and tool by tool. We’ll explore why standardisation matters, what elements to standardise (and what to keep flexible), how to build a flexible framework that works for diverse roles, and how to implement it in a way that gains buy-in from recruiters, hiring managers, and clients alike. By the end, you’ll have a practical blueprint to transform your assessment process from a source of inconsistency into your agency’s most reliable competitive advantage.

Why Standardising Assessments Matters: The Hidden Costs of Ad-Hoc Evaluation

Before diving into the "how," it’s essential to understand why the lack of standardisation is more than just an inconvenience—it’s a systemic risk that undermines quality, fairness, and efficiency.

1. Inconsistent Evaluation Undermines Reliability and Fairness

When each recruiter (or hiring manager) uses their own criteria, questions, and scoring methods, the assessment process becomes inherently unreliable.

- The Problem: Two equally qualified candidates might receive vastly different scores depending on who screened them, what questions were asked, or what factors the evaluator happened to focus on that day. One recruiter might prioritise years of experience, another specific project details, another communication style inferred from the resume—leading to noisy, unpredictable outcomes.

- The Consequence: This inconsistency makes it nearly impossible to trust that the shortlist represents the best available talent. It opens the door to unconscious bias (e.g., favouring candidates from certain schools or backgrounds) and makes it hard to defend decisions if questioned. In a market where clients increasingly demand transparency and fairness, unreliable assessment is a liability.

2. Wasted Recruiter Time and Reinventing the Wheel

Ad-hoc assessment forces recruiters to spend unnecessary time designing questions, scoring rubrics, and evaluation methods from scratch for every requisition—time that could be spent on higher-value activities.

- The Problem: Instead of leveraging proven, validated tools, recruiters often create custom questions on the fly, leading to reinvention of effort across similar roles (e.g., writing a new "problem-solving" question for every junior developer role).

- The Consequence: This is a significant drain on recruiter productivity. Time spent crafting assessments is time not spent building relationships, assessing motivation, or providing strategic counsel. Over time, this inefficiency adds up—reducing the number of requisitions a recruiter can handle effectively and increasing the risk of burnout.

3. Poor Candidate Experience and Perceived Unfairness

Candidates notice when the assessment process feels arbitrary, inconsistent, or irrelevant to the role.

- The Problem: If one candidate is asked a complex case study while another gets only a resume review—or if questions feel disconnected from the actual job—the process can feel unfair or opaque. Candidates may perceive favouritism, feel confused about what’s being evaluated, or doubt the agency’s professionalism.

- The Consequence: This damages the candidate experience, increases drop-off rates, and harms the agency’s employer brand. In a market where top talent has choices, a perception of unfairness can deter high-quality applicants and make future sourcing harder and more expensive.

4. Difficulty in Measuring and Improving Performance

Without standardisation, it’s nearly impossible to learn from past placements or improve the assessment process over time.

- The Problem: If every assessment is different, you can’t compare results across requisitions, identify patterns in what predicts success, or refine your tools based on outcomes. You’re flying blind—repeating the same mistakes without knowing it.

- The Consequence: This prevents the agency from building institutional knowledge, improving prediction accuracy, or demonstrating the validity of its screening process to clients. It also makes it harder to audit for bias or fairness, as there’s no consistent data to analyse.

5. Client Distrust and Micromanagement

When assessment feels opaque or inconsistent, clients lose confidence in the agency’s ability to deliver quality talent.

- The Problem: Clients don’t just want candidates; they want confidence. They want to know that the assessment is rigorous, objective, and aligned with their actual needs, not just a reflection of the recruiter’s gut feeling or bias.

- The Consequence: After repeated experiences of inconsistent or seemingly arbitrary assessments, clients often respond by imposing stricter controls: demanding more interview rounds, insisting on participating in early screens, or requiring additional assessments—all of which slow down the process and increase friction. Trust, once eroded, is incredibly hard to rebuild—and in the referral-driven world of recruitment, reputational damage spreads fast.

What to Standardise (and What to Keep Flexible)

Standardisation doesn’t mean treating every role the same. It means identifying the core elements of assessment that can be made consistent across clients and roles—while preserving flexibility for the unique nuances that matter.

✅ What to Standardise: The Foundation of Reliable Assessment

These are the elements that should be consistent across clients and roles to ensure fairness, comparability, and efficiency.

1. Assessment Methodology and Framework

- What to Standardise: The overall approach to assessment—e.g., using a combination of eligibility gates, skill tests, structured interviews, and work samples.

- Why: Ensures that every candidate goes through a similar, evidence-based process, reducing variability in how rigorously they’re evaluated.

- How: Define a standard assessment funnel (e.g., Application → Eligibility Gate → Skill Test → Structured Interview → Work Sample → Final Review) that can be adapted but not fundamentally changed for each role.
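The "adapted but not fundamentally changed" rule can be made explicit in tooling. The sketch below is illustrative only: the stage names come from the funnel above, but the choice of which stages are mandatory is an assumption made for the example, and `build_funnel` is a hypothetical helper rather than a feature of any particular ATS.

```python
from enum import IntEnum
from typing import Iterable, List


class Stage(IntEnum):
    """Stages of the standard funnel, in the order candidates move through them."""
    APPLICATION = 1
    ELIGIBILITY_GATE = 2
    SKILL_TEST = 3
    STRUCTURED_INTERVIEW = 4
    WORK_SAMPLE = 5
    FINAL_REVIEW = 6


# Assumption for illustration: these stages may never be dropped for any role.
MANDATORY = {
    Stage.APPLICATION,
    Stage.ELIGIBILITY_GATE,
    Stage.STRUCTURED_INTERVIEW,
    Stage.FINAL_REVIEW,
}


def build_funnel(skip: Iterable[Stage] = ()) -> List[Stage]:
    """Return the ordered stages for a role, refusing to drop mandatory ones."""
    skipped = set(skip)
    illegal = skipped & MANDATORY
    if illegal:
        names = ", ".join(stage.name for stage in sorted(illegal))
        raise ValueError(f"Cannot skip mandatory stages: {names}")
    return [stage for stage in Stage if stage not in skipped]


# A role that does not need a work sample keeps every other stage.
support_funnel = build_funnel(skip=[Stage.WORK_SAMPLE])
```

Encoding the funnel this way means a recruiter can shorten the process for a given role, but any attempt to remove a core stage fails loudly instead of silently producing a weaker process.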

2. Core Competency Categories (With Role-Specific Definitions)

- What to Standardise: A core set of universal competencies that are relevant across most roles (e.g., Problem Solving, Communication, Learnability, Collaboration, Integrity, Motivation/Fit).

- Why: Provides a common language for evaluation while allowing the definition and weighting of each competency to be tailored to the role.

- How: Create a competency library where each competency has a standard definition (e.g., "Problem Solving: The ability to analyse complex situations, identify root causes, and develop effective solutions"). For each role, recruiters select which competencies are relevant and define what "good" looks like in context (e.g., for a dev role: "Debugging a failing API endpoint"; for a sales role: "Handling a customer objection").
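One way to picture the competency library is as a fixed set of definitions plus a per-role layer that describes what "good" looks like. The following is a minimal sketch under that assumption; the class and field names (`Competency`, `RoleProfile`, `good_looks_like`) are hypothetical, and the definitions are the ones quoted in this guide.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Competency:
    name: str
    definition: str  # standard wording shared across all roles


LIBRARY: Dict[str, Competency] = {
    c.name: c
    for c in (
        Competency(
            "Problem Solving",
            "The ability to analyse complex situations, identify root causes, "
            "and develop effective solutions",
        ),
        Competency(
            "Learnability",
            "The ability to quickly acquire new knowledge and skills, adapt to "
            "changing requirements, and apply learning effectively",
        ),
    )
}


@dataclass(frozen=True)
class RoleProfile:
    role: str
    good_looks_like: Dict[str, str]  # competency name -> role-specific example

    def __post_init__(self) -> None:
        # Roles may only draw on competencies that exist in the shared library.
        unknown = sorted(set(self.good_looks_like) - set(LIBRARY))
        if unknown:
            raise KeyError(f"Not in competency library: {unknown}")


dev_role = RoleProfile(
    "Junior Developer", {"Problem Solving": "Debugging a failing API endpoint"}
)
sales_role = RoleProfile(
    "Sales Executive", {"Problem Solving": "Handling a customer objection"}
)
```

Both roles share one definition of Problem Solving while describing it in their own context, which is the split between standard and flexible elements described above.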

3. Standardised Assessment Tools and Formats

- What to Standardise: The types of tools used for each competency (e.g., situational judgment tests for decision-making, coding challenges for technical skills, structured interviews for behavioural traits).

- Why: Ensures that similar competencies are assessed in similar ways, making results more comparable and reducing the risk of invalid or biased methods.

- How: Build a toolkit of validated, role-agnostic assessment formats:

- Knowledge/Skills: Standardised tests, work samples, or technical challenges (e.g., a debugging exercise, a data analysis task).

- Behavioral Traits: Structured interview guides based on the STAR (Situation, Task, Action, Result) method or situational judgment tests (SJTs).

- Cognitive Abilities: Validated, fair ability tests (numerical, logical, verbal) where job-relevant.

- Motivation/Fit: Standardised questions about career goals, interest in the specific role/company, and alignment with values.

4. Standardised Scoring and Rating Scales

- What to Standardise: The rating scale used (e.g., 1-5 or 1-10) and the structure of behavioral anchors for each score point.

- Why: Ensures that scores are comparable across candidates and roles, and that evaluators are applying criteria consistently.

- How: Define clear, behaviorally anchored rating scales for each competency. For example, for "Communication":

- 1 = "Unable to convey basic ideas clearly"

- 3 = "Communicates ideas adequately but lacks clarity or conciseness"

- 5 = "Communicates complex ideas clearly, persuasively, and tailored to the audience"

- Key: Avoid vague scales like "poor/fair/good/excellent"—instead, tie each score to observable behaviors.
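A behaviourally anchored scale is easy to store as data so that scorecards always show the anchor text next to the number. The sketch below uses the Communication anchors listed above; how to describe the unanchored points (2 and 4) is an assumption made for illustration, and `describe_score` is a hypothetical helper.

```python
from typing import Dict

COMMUNICATION_ANCHORS: Dict[int, str] = {
    1: "Unable to convey basic ideas clearly",
    3: "Communicates ideas adequately but lacks clarity or conciseness",
    5: "Communicates complex ideas clearly, persuasively, and tailored to the audience",
}


def describe_score(score: int, anchors: Dict[int, str]) -> str:
    """Return the behavioural description that goes with a numeric score."""
    low, high = min(anchors), max(anchors)
    if not isinstance(score, int) or not low <= score <= high:
        raise ValueError(f"Score must be a whole number from {low} to {high}")
    if score in anchors:
        return anchors[score]
    # Assumption: unanchored points are described relative to their neighbours.
    below = max(k for k in anchors if k < score)
    above = min(k for k in anchors if k > score)
    return f"Between {below} ({anchors[below]}) and {above} ({anchors[above]})"


summary = describe_score(4, COMMUNICATION_ANCHORS)
```

In practice an agency may prefer to write explicit anchors for every point on the scale; the fallback here simply shows that a score should never appear without observable behaviour attached to it.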

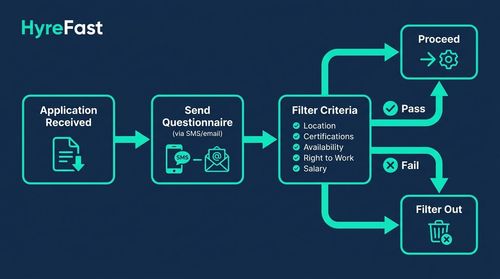

5. Standardised Eligibility Gates (Knockout Criteria)

- What to Standardise: The use of automated, objective knockout questionnaires to filter out candidates who fail non-negotiable criteria before any human assessment begins.

- Why: Ensures that all candidates are held to the same baseline standards (location, certifications, availability, right to work, salary expectations) and prevents wasted time on objectively unqualified profiles.

- How: Create a library of standard eligibility questions for common non-negotiables (e.g., "Are you authorized to work in [Location] without sponsorship?", "Do you have [X] years of experience with [Skill]?", "What is your expected salary?"). These are triggered automatically upon application.
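The eligibility gate reduces to a list of rules applied identically to every applicant. Here is a minimal sketch of that idea; the rule keys, the three-year experience threshold, and the 60,000 salary ceiling are hypothetical values chosen for the example, and the experience question is rephrased as a numeric one so it can be checked against a threshold.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class KnockoutRule:
    key: str                       # field name in the candidate's answers
    question: str                  # wording shown to the candidate
    passes: Callable[[Any], bool]  # True if the answer clears the gate


# Hypothetical thresholds for one requisition; real values come from the client brief.
RULES = [
    KnockoutRule(
        "work_authorised",
        "Are you authorized to work in [Location] without sponsorship?",
        lambda answer: answer is True,
    ),
    KnockoutRule(
        "years_experience",
        "How many years of experience do you have with [Skill]?",
        lambda answer: answer >= 3,
    ),
    KnockoutRule(
        "expected_salary",
        "What is your expected salary?",
        lambda answer: answer <= 60_000,
    ),
]


def evaluate(rules: List[KnockoutRule], answers: Dict[str, Any]) -> Tuple[bool, List[str]]:
    """Apply every rule; a missing answer counts as a failure."""
    failed = [
        rule.key
        for rule in rules
        if rule.key not in answers or not rule.passes(answers[rule.key])
    ]
    return (not failed, failed)


eligible, reasons = evaluate(
    RULES,
    {"work_authorised": True, "years_experience": 4, "expected_salary": 72_000},
)
```

Returning the list of failed rules, not just a yes or no, keeps the decision explainable if a candidate or client later asks why a profile was filtered out.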

6. Standardised Process for Calibration and Feedback

- What to Standardise: Regular calibration sessions for interviewers and structured post-placement debriefs to learn and improve.

- Why: Ensures that evaluators are applying the scoring rubrics consistently over time and that the agency learns from outcomes.

- How: Require that before starting assessments for a role type, all interviewers (recruiters, hiring managers, panelists) score a few sample responses together using the standard rubric to align on what each score point means. After placement (especially if it doesn’t work out), conduct a debrief: What did our assessment predict correctly? What did it miss? How can we improve?
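A calibration session is easier to run when the group knows in advance which sample responses it disagreed on. The sketch below flags those samples by the spread between the highest and lowest score; the one-point tolerance and the function name `flag_disagreements` are assumptions for illustration, not an established standard.

```python
from typing import Dict


def flag_disagreements(
    scores: Dict[str, Dict[str, int]], max_spread: int = 1
) -> Dict[str, int]:
    """Return {sample_id: spread} for samples where raters differ by more than max_spread.

    `scores` maps each sample response to the score each rater gave it.
    """
    flagged = {}
    for sample_id, by_rater in scores.items():
        spread = max(by_rater.values()) - min(by_rater.values())
        if spread > max_spread:
            flagged[sample_id] = spread
    return flagged


calibration_scores = {
    "sample_A": {"recruiter_1": 3, "recruiter_2": 4, "hiring_manager": 3},
    "sample_B": {"recruiter_1": 2, "recruiter_2": 5, "hiring_manager": 4},
}

to_discuss = flag_disagreements(calibration_scores)
# sample_B is flagged (scores range from 2 to 5); sample_A is within tolerance.
```

Samples that come back flagged are the ones worth discussing until the group agrees on what each score point means; samples within tolerance can be skipped.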

🔁 What to Keep Flexible: Tailoring to Role and Client Nuances

These are the elements that should remain adaptable to ensure the assessment is relevant, valid, and valuable for each specific context.

1. Role-Specific Competency Selection and Weighting

- What to Keep Flexible: Which core competencies are relevant for the role, and how much each should weigh in the overall evaluation.

- Example: For a data scientist role, "Problem Solving" and "Technical Skills (Python/SQL)" might be weighted highly, while for a customer service role, "Communication" and "Empathy" might take precedence.

- How: Use the competency library to select and weight relevant competencies based on the role’s success profile (defined via role discovery with the hiring manager).
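Role-specific weighting can be expressed as a weighted average of the standard competency scores. The sketch below assumes integer weights agreed with the hiring manager and scores on the 1-5 scale; the weights, the competency names used for the data scientist example, and the function name are illustrative.

```python
from typing import Dict


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of competency scores; weights need not sum to 1."""
    if set(scores) != set(weights):
        raise ValueError("Scores and weights must cover the same competencies")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("Weights must sum to a positive number")
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Hypothetical weighting for a data scientist role.
data_scientist_weights = {"Problem Solving": 4, "Technical Skills": 4, "Communication": 2}
candidate_scores = {"Problem Solving": 4, "Technical Skills": 5, "Communication": 3}

overall = weighted_score(candidate_scores, data_scientist_weights)  # (16 + 20 + 6) / 10 = 4.2
```

Because the weights are divided by their sum, the same candidate scores can be re-weighted for a different role without rescoring, which keeps the underlying ratings comparable across clients.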

2. Specific Assessment Content and Scenarios

- What to Keep Flexible: The exact questions, case studies, or scenarios used in assessments—tailored to the role’s actual tasks and challenges.

- Example: A situational judgment test for a project manager might focus on handling scope creep, while one for a nurse might focus on patient communication under stress.

- How: Build a library of role-specific assessment content (e.g., coding challenges for dev roles, call simulations for support roles, case studies for analysts) that plugs into the standardised formats.

3. Depth and Focus of Assessment

- What to Keep Flexible: How deeply to assess each competency based on the role’s requirements and seniority level.

- Example: An entry-level role might focus on foundational skills and learnability, while a senior role might emphasise strategic thinking, leadership, and stakeholder management.

- How: Adjust the complexity, duration, and focus of assessments based on the role’s success profile and the hiring manager’s input.

4. Client-Specific Values and Cultural Fit Criteria

- What to Keep Flexible: How to assess alignment with the client’s specific values, work style, or team dynamics.

- Example: One client might prioritise "innovation and risk-taking," another "process and precision," another "collaboration and humility."

- How: Include standardised questions about values and work style, but allow the hiring manager to define what "good" looks like in their context (e.g., "Describe a time you challenged the status quo" for an innovative culture).

Building Your Standardised Assessment Framework: A Step-by-Step Blueprint

You don’t need to boil the ocean. Start where you can get the most impact and build momentum.

Phase 1: Foundational Work – Define Your Core Assessment Elements (Weeks 1-2)

- Build Your Competency Library:

- Identify a core set of universal competencies relevant to most roles you recruit for (e.g., Problem Solving, Communication, Collaboration, Learnability, Integrity, Motivation/Fit).

- For each, write a clear, behaviorally anchored definition (e.g., "Learnability: The ability to quickly acquire new knowledge and skills, adapt to changing requirements, and apply learning effectively").

- Select Your Standardised Assessment Formats:

- Decide on the types of tools you’ll use for each competency (e.g., SJTs for decision-making, structured interviews for behavioral traits, coding challenges for technical skills).

- Choose validated, fair tools where possible (e.g., established ability tests, well-designed work samples).

- Create Your Standardised Rating Scales:

- For each competency, define a 1-5 or 1-10 scale with clear behavioral anchors for each score point (e.g., for "Problem Solving": 1 = "Unable to identify the problem," 3 = "Identifies problem and suggests a basic solution," 5 = "Identifies root cause, evaluates multiple solutions, selects optimal approach").

- Design Your Standard Eligibility Gate:

- Create a library of standard knockout questions for common non-negotiables (location, certifications, availability, right to work, salary expectations).

- Plan to automate these via SMS/email or ATS triggers before any human assessment begins.

Phase 2: Build Your Assessment Toolkit (Weeks 3-6)

- Develop Role-Specific Assessment Content:

- For each recurring role type/family (e.g., "Junior Java Developer," "Customer Service Agent," "Sales Executive"), build a library of assessment content that plugs into your standardised formats.

- Examples:

- Technical Role: A coding challenge (e.g., "Build a REST API to manage user data") or a debugging exercise.

- Customer Service Role: A call simulation scenario or a situational judgment test on handling angry customers.

- Analyst Role: A data analysis task using a sample dataset (e.g., "Analyze this sales data and identify trends").

- Sales Role: A role-play exercise handling a common objection or a situational judgment test on negotiation.

- Create Standardised Interview Guides:

- For each role type, build a structured interview guide based on the STAR method, tied to your selected competencies.

- Include a mix of behavioral ("Tell me about a time when...") and situational ("What would you do if...") questions.

- Provide space for recruiters to note observations and score each competency using your standardised rubric.

- Set Up Automation for Eligibility Gates:

- Configure your ATS or use tools like Typeform, Google Forms (with Apps Script), or integrated assessment platforms (HireVue, Harver) to automatically send knockout questionnaires upon application and update candidate status based on responses.

Phase 3: Implement, Calibrate, and Train (Weeks 7-10)

- Train Your Team on the Framework:

- Run workshops on your competency library, assessment formats, rating scales, and how to use the role-specific tools.

- Emphasise that standardisation provides a foundation—recruiters still use their judgment to interpret results and assess nuance.

- Practice with role-plays: Have recruiters assess sample candidates using the standardised tools and discuss discrepancies.

- Conduct Calibration Sessions:

- Before going live with a new role type, have recruiters and (if possible) hiring managers score the same sample responses (written or video) using your standardised rubric.

- Discuss discrepancies until you reach consensus on what each score point means (e.g., what does a "4" on Communication actually look like in practice?).

- Repeat calibration periodically to maintain consistency.

- Launch Pilot and Refine:

- Use the standardised framework for a few live requisitions of a role type.

- Track time taken, recruiter feedback, and initial hiring manager impressions.

- Refine the role-specific content, interview guides, or weighting based on feedback.

Phase 4: Embed, Monitor, and Continuously Improve (Ongoing)

- Make it Standard: Require the use of your standardised framework (competency library, assessment formats, rating scales, eligibility gates) for all requisitions of a given type. Integrate it into your ATS workflow if possible.

- Track Metrics: Monitor:

- Time-to-fill (should stabilize or improve as efficiency gains kick in)

- Submission-to-interview rate, i.e. the share of submitted candidates whom the client chooses to interview (should increase as assessment becomes more accurate)

- Hiring manager satisfaction with shortlists and hires

- Offer acceptance rate

- Early turnover/performance of placed candidates (6-month check-in)

- Recruiter satisfaction with the assessment process (via regular surveys)

- Audit for Bias and Fairness: Regularly review your data:

- Are pass rates similar across demographic groups (gender, ethnicity, educational background) for comparable roles?

- Are there surprises in who succeeds vs. who fails?

- Use this to refine your competency definitions, assessment tools, or scoring rubrics.

- Close the Loop with Clients: Share your standardised approach as a value-add: "We use a competency-based, standardised assessment process to ensure we’re evaluating candidates fairly and consistently—so you can trust that our shortlists are based on merit, not bias."

- Continuously Improve: Treat your assessment framework as a living document. Update competency definitions, refine assessment tools, and stay current on best practices in hiring science.
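For the bias audit step above, one simple starting point is to compare pass rates between groups. The sketch below uses the "four-fifths" heuristic, which flags any group whose pass rate is below 80% of the highest group's rate. This is one commonly used screening heuristic that originates in US employment guidance; it is an assumption here, not something this guide prescribes, and it is not a legal test. The group labels and counts are invented for the example.

```python
from typing import Dict, Tuple


def selection_rates(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """outcomes maps group -> (passed, total); groups with no candidates are ignored."""
    return {
        group: passed / total
        for group, (passed, total) in outcomes.items()
        if total > 0
    }


def adverse_impact(
    outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8
) -> Dict[str, float]:
    """Return {group: impact_ratio} for groups below `threshold` of the best pass rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}
    return {
        group: rate / best
        for group, rate in rates.items()
        if rate / best < threshold
    }


# Invented figures: pass counts at the skill-test stage for one role family.
stage_outcomes = {"group_a": (30, 100), "group_b": (18, 100)}
flags = adverse_impact(stage_outcomes)
# group_b passes at 18% against 30% for group_a, a ratio of about 0.6.
```

A flag is a prompt to look more closely at the assessment content and scoring for that stage, not proof of bias; with small candidate numbers the ratio can swing widely by chance.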

The Role of Technology in Enabling Standardisation

Technology isn’t just helpful for standardisation—it’s often essential for making it scalable, efficient, and effective.

1. Automated Eligibility Gates

- How It Helps: Use SMS/email triggers or integrated ATS features to automatically send knockout questionnaires upon application and filter out candidates who fail objective criteria (location, certifications, availability, right to work, salary expectations).

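- Impact: Applies the same non-negotiable filters to every applicant, removing recruiter-to-recruiter variation at the top of the funnel. The criteria themselves still need periodic review, because a poorly chosen knockout question is applied just as consistently as a good one.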

2. AI-Powered Skill Matching (With Caution)

- How It Helps: Use NLP-based semantic search (e.g., Sentence-BERT, fine-tuned BERT) to compare resume content to the job description and surface candidates with high conceptual fit—helping to ensure human review time is spent on the most relevant profiles.

- Caution: Always audit these models for bias (e.g., check if scores systematically differ by gender, ethnicity, or educational background for comparable candidates) and use them as a starting point, not a final verdict.

- Impact: Increases the efficiency and relevance of human screening while maintaining a foundation of objectivity.
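To make the ranking idea concrete without depending on a particular model, the sketch below substitutes a plain bag-of-words cosine similarity for the sentence embeddings mentioned above. It is a deliberately simpler stand-in: it only measures word overlap, whereas an embedding model such as Sentence-BERT would also capture related wording. The function names and sample texts are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokens(text: str) -> Counter:
    """Lower-case word counts; keeps '+' and '#' so 'c++' and 'c#' survive."""
    return Counter(re.findall(r"[a-z0-9+#]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank(job_description: str, resumes: Dict[str, str]) -> List[Tuple[str, float]]:
    """Return (candidate_id, similarity) pairs, most similar first."""
    jd = tokens(job_description)
    scored = [(cosine(jd, tokens(text)), cid) for cid, text in resumes.items()]
    return [(cid, round(score, 3)) for score, cid in sorted(scored, reverse=True)]


shortlist = rank(
    "Data analyst with Python and SQL",
    {
        "cand_1": "Python and SQL data analysis, built dashboards",
        "cand_2": "Landscape gardening and garden design",
    },
)
```

Whatever similarity method is used, the output is only an ordering for human review, and the scores should be included in the same bias audits as any other assessment stage.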

3. Structured Assessment Platforms

- How It Helps: Use tools like:

- HireVue (for structured video interviews; use AI-assisted scoring only for predefined competencies, and only after bias auditing).

- Codility/HackerRank (for objective code evaluation).

- Harver (for situational judgment tests and ability tests).

- Pymetrics, now part of Harver (for neuroscience-based games measuring cognitive and emotional traits).

- Impact: Delivers consistent evaluations of knowledge, skills, and cognitive/behavioural traits, provided the tools have been validated for the role and are audited for bias like any other stage.

4. AI Note-Taking & Summarization

- How It Helps: Use tools like Fireflies.ai, Otter.ai, or built-in features in Zoom/Teams to automatically transcribe and highlight key points from interviews.

- Impact: Frees the recruiter to focus on listening and assessing rather than typing notes, ensuring nothing is missed and enabling fairer post-interview calibration.

5. Integrated ATS/CRM for Tracking and Reporting

- How It Helps: Use your ATS/CRM to:

- Tag candidates by role, competencies assessed, and scores.

- Track assessment completion rates, drop-off points, and time spent.

- Generate reports on submission-to-interview ratio, hiring manager satisfaction, and early turnover.

- Impact: Enables data-driven improvement of your standardised framework and provides transparency to clients.
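Once candidates are tagged consistently, the reports above become simple aggregations. The sketch below computes a submission-to-interview rate per role from exported records; the record fields (`role`, `submitted`, `interviewed`) are hypothetical and would need mapping to whatever your ATS or CRM actually exports.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def submission_to_interview_rate(records: Iterable[Mapping]) -> Dict[str, float]:
    """Share of submitted candidates who reached client interview, per role."""
    counts = defaultdict(lambda: [0, 0])  # role -> [submitted, interviewed]
    for record in records:
        if record["submitted"]:
            counts[record["role"]][0] += 1
            counts[record["role"]][1] += int(record["interviewed"])
    return {
        role: interviewed / submitted
        for role, (submitted, interviewed) in counts.items()
    }


# Invented sample export.
export = [
    {"role": "Junior Java Developer", "submitted": True, "interviewed": True},
    {"role": "Junior Java Developer", "submitted": True, "interviewed": False},
    {"role": "Sales Executive", "submitted": True, "interviewed": True},
    {"role": "Sales Executive", "submitted": False, "interviewed": False},
]

rates = submission_to_interview_rate(export)
# Junior Java Developer: 1 of 2 submitted candidates interviewed; Sales Executive: 1 of 1.
```

Tracking the same figure per role family before and after adopting the framework gives a like-for-like comparison, which is only possible because the underlying data is recorded in one consistent shape.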

Conclusion: Standardisation is the Foundation of Trust, Quality, and Scale

Standardising candidate assessments across clients isn’t about removing the recruiter’s expertise or turning hiring into a mechanical process. It’s about respecting the complexity of human judgment by giving it the right tools to succeed. It’s about recognising that while a recruiter’s intuition is valuable, it is far more reliable, fair, and effective when guided by a clear framework, consistent methods, and a commitment to objectivity.

By standardising the foundation (your competency library, assessment formats, rating scales, eligibility gates, and calibration processes), you don’t just make assessment more reliable and efficient. You build a foundation of trust with clients and candidates. You show them that you don’t just guess at talent; you measure it against what actually matters for success in their specific context. You turn assessment from a potential liability into a demonstrable strength: a signal that your agency operates with rigour, fairness, and a relentless focus on quality.

In the long run, the agencies that win aren’t just those with the biggest databases or the loudest marketing. They’re the ones that clients trust implicitly to deliver not just candidates, but the right candidates, time after time, requisition after requisition. And that trust is built not on luck or charisma, but on the quiet, consistent power of a well-standardised process. Start building yours today. Your reputation and your bottom line depend on it.